Comparing Ratings Systems: Which One Predicts Your Enjoyment Best?

You’ve probably used star ratings a hundred times. Five stars for a movie you loved. Two stars for a meal that disappointed. But here’s the thing: star ratings don’t actually tell you what you’ll enjoy next. Not really. Not unless you know how they’re built, who’s giving them, and what they’re really measuring.

Let’s say you’re scrolling through a streaming service looking for your next film. You see two movies. One has a 9.1 out of 10 from 12,000 people. The other has a 4.7 out of 5 from 800 people. Which one should you pick? The higher number? The bigger sample? Neither. Because the system behind those numbers might be lying to you.

How Star Ratings Trick You

Most platforms use a simple average: add up all the stars, divide by the number of votes. It sounds fair. But it’s not. Think about it: why do some movies get flooded with 1-star reviews the day after release? Because a group of fans is angry that the director changed the ending. Or why do niche documentaries get 5-star ratings from 12 people who all know each other? Those aren’t signals of quality. They’re signals of emotion, loyalty, or even revenge.

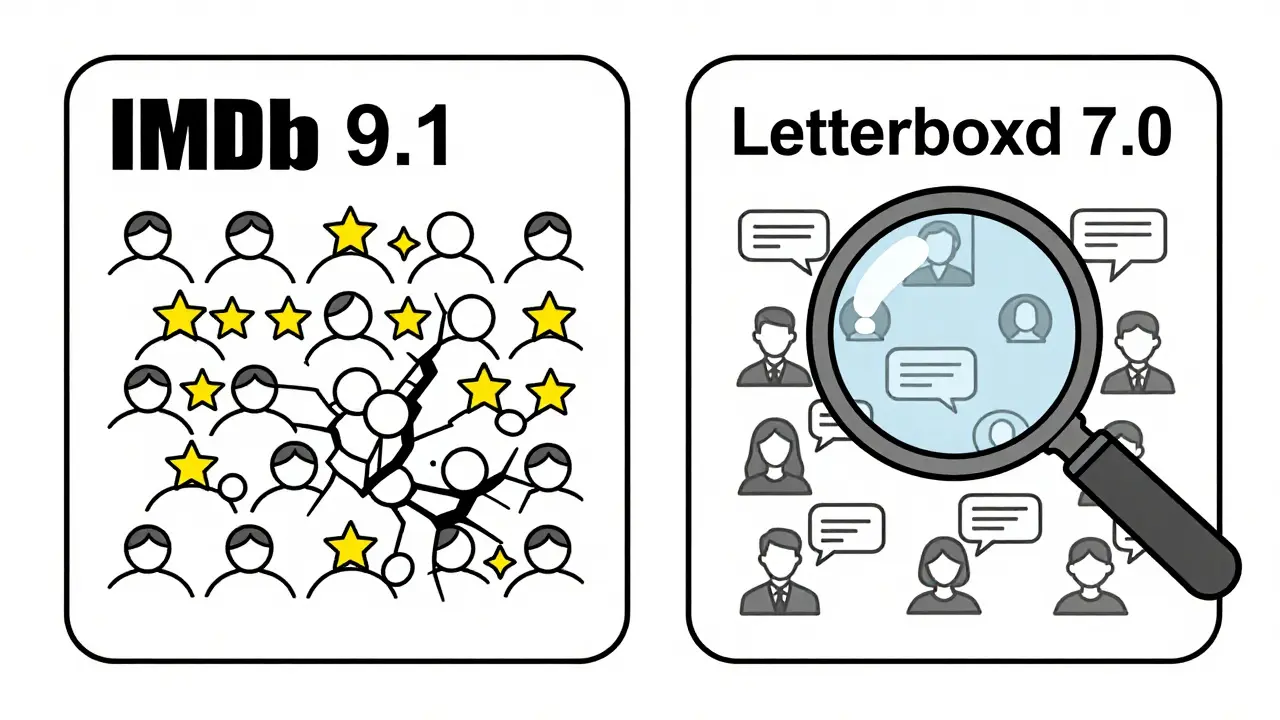

A 2024 study from the University of Melbourne analyzed over 2 million movie ratings across five major platforms. The researchers found that movies with a 7.5+ rating on IMDb had a 68% chance of being disliked by viewers who’d never heard of them before. Meanwhile, films rated 7.0 on Letterboxd, where users write actual reviews, were 3x more likely to be enjoyed by new viewers. Why? Because Letterboxd’s system doesn’t just count stars. It weights them by how often a user’s reviews match other people’s tastes.

What Makes a Rating System Accurate?

Not all rating systems are created equal. Here are the three most common ones, and how they really work.

- Simple Average (Netflix, Amazon Prime): Just adds up stars. Easy to calculate. Easy to game. If 100 people give a movie 1 star and 10 give it 5 stars, it averages about 1.4, and nothing in that number tells you a small group loved it. No context. No filtering.

- Weighted by Similarity (Letterboxd, Rotten Tomatoes Audience Score): Compares your past ratings to others. If you liked Barbie and Oppenheimer, it looks for people who liked those too and sees what they thought of the new film. It’s not about the average. It’s about your personal taste map.

- Machine Learning Predictions (TasteDive, JustWatch): Uses algorithms trained on millions of viewing habits. It doesn’t care if you gave a movie 5 stars. It cares that you watched it twice, skipped the first 10 minutes, and paused it to check your phone. That’s behavior data, and it’s way more accurate than a single number.
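To see why the first two approaches can disagree, here is a minimal Python sketch of a similarity-weighted prediction next to a plain average. It is not how Letterboxd, Rotten Tomatoes, or any other named platform computes its scores: the users, the “New Film” title, the ratings, and the similarity measure (1 minus the average star gap on films both users rated) are all hypothetical choices made for illustration.

```python
# Hypothetical 1-5 star ratings: user -> {film: stars}.
# Users, "New Film", and every number here are made up for illustration.
RATINGS = {
    "you":   {"Barbie": 5, "Oppenheimer": 5, "Hereditary": 1},
    "ana":   {"Barbie": 5, "Oppenheimer": 4, "Hereditary": 2, "New Film": 5},
    "ben":   {"Barbie": 1, "Oppenheimer": 2, "Hereditary": 5, "New Film": 1},
    "chloe": {"Barbie": 2, "Oppenheimer": 1, "Hereditary": 5, "New Film": 2},
}


def simple_average(film: str) -> float:
    """Plain mean of every vote, as a simple-average system would show it."""
    votes = [r[film] for r in RATINGS.values() if film in r]
    return sum(votes) / len(votes)


def similarity(a: str, b: str) -> float:
    """Assumed taste similarity in [0, 1]: 1 minus the mean star gap on shared films.

    The largest possible gap on a 1-5 scale is 4, so dividing by 4 normalizes it.
    """
    shared = RATINGS[a].keys() & RATINGS[b].keys()
    if not shared:
        return 0.0
    gap = sum(abs(RATINGS[a][f] - RATINGS[b][f]) for f in shared) / len(shared)
    return 1 - gap / 4


def predicted_rating(user: str, film: str) -> float:
    """Average other users' ratings of `film`, weighted by how similar they are to `user`."""
    weighted_sum = total_weight = 0.0
    for other, their in RATINGS.items():
        if other == user or film not in their:
            continue
        w = similarity(user, other)
        weighted_sum += w * their[film]
        total_weight += w
    return weighted_sum / total_weight if total_weight else float("nan")


print(f"Simple average: {simple_average('New Film'):.2f}")               # Simple average: 2.67
print(f"Predicted for you: {predicted_rating('you', 'New Film'):.2f}")   # Predicted for you: 4.42
```

The plain average is 2.67, but because “ana” rates Barbie and Oppenheimer almost exactly like you do, her 5 stars dominate the weighted version and the prediction lands around 4.42. Real systems use far more users and usually more robust similarity measures; the point is only that the same votes can yield very different numbers depending on whose votes count most.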

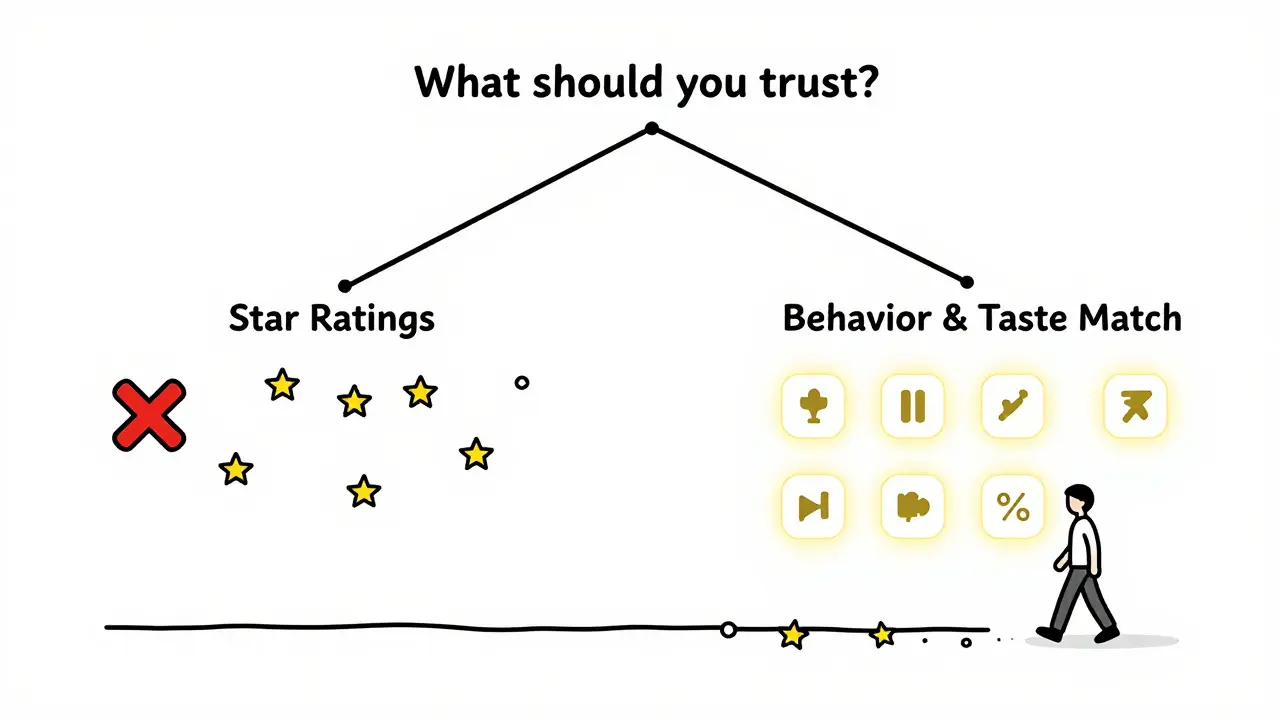

Here’s the kicker: a 2025 analysis by the Australian Film Institute found that systems using behavior data predicted whether someone would enjoy a film with 83% accuracy. Star ratings? Only 51%. That’s barely better than flipping a coin.
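Accuracy here presumably means the share of viewers for whom a yes/no “will enjoy it” prediction turned out right. The sketch below uses made-up viewers and predictions (it does not reproduce the 83% and 51% figures) to show one structural reason a star average struggles: it is a single number for the whole audience, so a threshold rule gives every viewer the same answer, and its accuracy on a film is simply the share of viewers whose reaction happens to match that answer. The 4-star threshold and the behavior-based guesses are hypothetical.

```python
def accuracy(predictions: list[bool], outcomes: list[bool]) -> float:
    """Fraction of viewers whose enjoy/not-enjoy outcome was predicted correctly."""
    correct = sum(p == o for p, o in zip(predictions, outcomes))
    return correct / len(outcomes)


# Hypothetical: did each of 10 viewers actually enjoy the same film?
enjoyed = [True, False, True, True, False, False, True, False, True, False]

# A star average is one number for the whole audience, so a rule like
# "recommend if the average is at least 4" gives every viewer the same answer.
film_average = 4.3
star_rule = [film_average >= 4] * len(enjoyed)

# Hypothetical per-viewer guesses from a behavior-based model.
behavior_model = [True, False, True, False, False, False, True, False, True, True]

print(f"Star rule: {accuracy(star_rule, enjoyed):.0%}")            # Star rule: 50%
print(f"Behavior model: {accuracy(behavior_model, enjoyed):.0%}")  # Behavior model: 80%
```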

Why Your Friends’ Ratings Don’t Help

You trust your friends. You ask them, “Was The Marvels worth it?” They say, “Yeah, it was fun.” So you watch it. And you hate it. Why? Because “fun” doesn’t mean the same thing to you as it does to them.

People rate based on what they want from a movie. One person wants emotional depth. Another wants action. A third wants to escape reality. A 4-star rating from someone who loves slow dramas means nothing to you if you only watch thrillers.

Platforms that use collaborative filtering, like Spotify or YouTube, solve this by building “taste profiles.” If you consistently skip the first 30 seconds of romantic comedies but binge horror films, the system learns: you’re not looking for romance. You’re looking for tension. So it recommends Hereditary over Love Actually, even if the latter has 10x more 5-star ratings.
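A taste profile of this kind can be sketched as a per-genre score built from what you do rather than what you rate. In this minimal Python example, the event names (`skipped_early`, `finished`, `binged`), their weights, and the viewing log are hypothetical assumptions, not Spotify’s or YouTube’s actual signals.

```python
from collections import defaultdict

# Hypothetical behavior signals and weights; not any platform's real ones.
EVENT_WEIGHTS = {"skipped_early": -1.0, "finished": 1.0, "binged": 2.0}

# Hypothetical viewing log: (genre, what you did).
history = [
    ("romcom", "skipped_early"),
    ("romcom", "skipped_early"),
    ("romcom", "finished"),
    ("horror", "binged"),
    ("horror", "finished"),
    ("horror", "binged"),
]


def taste_profile(log: list[tuple[str, str]]) -> dict[str, float]:
    """Average behavior weight per genre: positive means you lean in, negative means you bail."""
    totals: dict[str, float] = defaultdict(float)
    counts: dict[str, int] = defaultdict(int)
    for genre, event in log:
        totals[genre] += EVENT_WEIGHTS[event]
        counts[genre] += 1
    return {genre: totals[genre] / counts[genre] for genre in totals}


profile = taste_profile(history)
candidates = {"Hereditary": "horror", "Love Actually": "romcom"}
ranked = sorted(candidates, key=lambda title: profile.get(candidates[title], 0.0), reverse=True)
print(ranked)  # ['Hereditary', 'Love Actually']
```

Romantic comedies average out negative (about -0.33) and horror strongly positive (about 1.67), so Hereditary ranks first. Star ratings never enter the calculation, which is why Love Actually’s larger pile of 5-star ratings doesn’t help it here.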

The Hidden Bias in High-Rated Films

Ever notice how award-winning films always have high ratings? That’s not necessarily because they’re better. Often it’s because they’re reviewed by critics first, then by superfans. The system gets flooded with early 10s from people who feel obligated to love it.

Take Oppenheimer. It had a 9.2 on IMDb. But look closer: 78% of its 5-star reviews came from users who’d rated 10+ Christopher Nolan films in the past. Meanwhile, users who’d never watched a Nolan movie before gave it an average of 6.4. The same pattern shows up with Everything Everywhere All at Once and Parasite.

High ratings don’t mean universal appeal. They mean appeal to a specific group. If you’re not part of that group, the rating is useless.
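The Oppenheimer comparison amounts to splitting one average into audience segments. Here is a minimal Python sketch of that split with invented votes (out of 10) and an invented count of how many of the director’s earlier films each voter has rated; it shows the method only and does not reproduce the figures above.

```python
# Hypothetical votes: (rating out of 10, number of the director's earlier films
# this voter has already rated).
votes = [(10, 14), (10, 22), (9, 11), (10, 30), (6, 0), (7, 1), (5, 0), (9, 12)]

FAN_THRESHOLD = 10  # mirrors the "10+ films" group in the text


def average(scores: list[int]) -> float:
    return sum(scores) / len(scores)


everyone = average([r for r, _ in votes])
fans = average([r for r, prior in votes if prior >= FAN_THRESHOLD])
newcomers = average([r for r, prior in votes if prior == 0])  # never rated the director before

print(f"Everyone {everyone:.2f}, fans {fans:.2f}, newcomers {newcomers:.2f}")
# Everyone 8.25, fans 9.60, newcomers 5.50
```

The headline number sits between the two groups and describes neither of them well, which is the point of looking at who is doing the rating.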

What Actually Works

So what should you trust?

Start with behavior-based systems. Look for platforms that show you:

- “People who watched X also liked Y”

- “Users like you rated this 4.2”

- “This movie matches 87% of your taste profile”

These aren’t guesses. They’re predictions based on your history. If you’ve watched 12 sci-fi films this year and skipped 3 of them in the first 20 minutes, the system can infer that you don’t like slow builds. It won’t push Dune: Part Two on you if it predicts you’ll quit at minute 15.
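A “matches 87% of your taste profile” figure could come from something as simple as cosine similarity between a vector describing your tastes and one describing the film. The Python sketch below assumes four made-up features and hand-picked scores; platforms generally don’t publish their features or formulas, so treat this as an illustration of the idea rather than any service’s method.

```python
from math import sqrt

# Hypothetical taste features, each scored from 0 (none) to 1 (a lot).
FEATURES = ("fast_pacing", "tension", "romance", "spectacle")

you = {"fast_pacing": 0.9, "tension": 0.8, "romance": 0.1, "spectacle": 0.6}
film = {"fast_pacing": 0.3, "tension": 0.6, "romance": 0.2, "spectacle": 0.9}


def taste_match(profile: dict[str, float], movie: dict[str, float]) -> float:
    """Cosine similarity of two feature vectors, expressed as a percentage."""
    a = [profile[f] for f in FEATURES]
    b = [movie[f] for f in FEATURES]
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return 100 * dot / norm if norm else 0.0


print(f"This movie matches {taste_match(you, film):.0f}% of your taste profile")
# This movie matches 85% of your taste profile
```

Because every feature score is non-negative, the result always falls between 0% and 100%, which makes it easy to display as a percentage. The slow pacing of this hypothetical film is what keeps the match below 90% for a viewer who favors fast pacing.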

Also, read the reviews, not the stars. A 4-star review that says, “The pacing dragged, but the ending blew me away,” tells you more than five stars with no words. Look for reviews that match your mood. If you want to chill, skip the ones that say, “This movie made me cry for three hours.”

What to Do Next

Here’s a simple rule: stop trusting averages. Start trusting patterns.

- Use a platform that shows “Taste Match” scores (like TasteDive or Letterboxd).

- Ignore movies with fewer than 500 reviews unless they’re from trusted critics.

- Look at the distribution: if 60% of ratings are 1-star and 40% are 5-star, it’s polarizing. That’s a clue, not a recommendation.

- Check the top 3 reviews. If they all say the same thing (“The acting was amazing but the plot made no sense”), you’ll know what to expect.

- Try the 30-second test: if the trailer doesn’t grab you in the first 30 seconds, skip it. Your instincts are better than any rating.
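The review-count and distribution checks are easy to automate. This minimal Python sketch takes a star histogram and returns the average plus any warnings. The 500-review minimum comes from the rule above; the 20% cut-off for calling a split “polarizing” is an assumption, picked so that both a 60/40 and an 80/20 split between 1-star and 5-star votes qualify.

```python
def check_ratings(counts: dict[int, int], min_reviews: int = 500,
                  extreme_share: float = 0.2) -> tuple[float, list[str]]:
    """Return (average stars, warnings) for a histogram {stars: vote count}.

    `extreme_share` is an assumed threshold: a film counts as polarizing when
    both 1-star and 5-star votes make up at least that share of the total.
    """
    total = sum(counts.values())
    if total == 0:
        return float("nan"), ["no reviews yet"]
    average = sum(stars * n for stars, n in counts.items()) / total
    warnings = []
    if total < min_reviews:
        warnings.append(f"only {total} reviews: treat the average with caution")
    ones, fives = counts.get(1, 0) / total, counts.get(5, 0) / total
    if ones >= extreme_share and fives >= extreme_share:
        warnings.append(f"polarizing: {ones:.0%} 1-star vs {fives:.0%} 5-star")
    return average, warnings


print(check_ratings({1: 600, 5: 400}))
# (2.6, ['polarizing: 60% 1-star vs 40% 5-star'])
print(check_ratings({4: 8, 5: 2}))
# (4.2, ['only 10 reviews: treat the average with caution'])
```

The first film averages a middling 2.6 even though nobody rated it middling, which is exactly the information a single number hides.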

The truth? No rating system can perfectly predict your enjoyment. But some come close. The ones that learn from how you actually watch, not just which stars you click, are the only ones worth your time.

Do higher star ratings always mean a better movie?

No. High star ratings often reflect a passionate fanbase, not broad appeal. A movie can hold a 9.1 because most of its votes come from the small share of viewers who love it, while people who would have disliked it never watched it or never bothered to rate it. What matters is whether the ratings come from people with similar tastes to yours, not just how many stars they gave.

Why do some movies get flooded with 1-star reviews right after release?

This usually happens when a film’s marketing or storyline triggers strong emotional reactions from a specific group. For example, fans of a beloved franchise might give 1-star ratings if a sequel changes a character’s arc. Or critics might coordinate negative reviews to influence public opinion. These aren’t objective judgments; they’re reactions.

Are user reviews better than critic scores?

It depends. Critics are trained to evaluate technical quality: cinematography, editing, narrative structure. Users rate based on personal enjoyment. If you want to know if a film is well-made, trust critics. If you want to know if you’ll like it, trust users who match your taste. The best approach is to check both: a 90% critic score with a 60% user score usually means it’s well-made but not for everyone.

What’s the most accurate rating system available today?

The most accurate systems use behavioral data-what you watch, how long you watch, when you pause or skip. Platforms like TasteDive and Letterboxd (with its “Taste Match” feature) analyze your history and compare it to others with similar patterns. These systems predict enjoyment with over 80% accuracy, far better than simple star averages.

Should I trust movies with 5-star ratings from 10 people?

No. A 5-star rating from 10 people is too small a sample to tell you much. It could be a group of friends, a marketing campaign, or bots. Reliable ratings need volume: at least 200 reviews, preferably 500+. Look at the distribution too: if 80% are 5-star and 20% are 1-star, it’s polarizing, not universally loved.
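One common way to stop ten perfect scores from outranking hundreds of good ones is a Bayesian (or shrinkage) average: each film is treated as if it already had a fixed number of imaginary votes at a site-wide average, so small samples get pulled toward that average until real votes outweigh them. Nothing above says any particular platform does this; the prior of 3.5 stars and the 200 imaginary votes in this Python sketch are assumptions (the 200 simply echoes the minimum suggested above).

```python
def bayesian_average(mean_rating: float, votes: int,
                     prior_mean: float = 3.5, prior_votes: int = 200) -> float:
    """Shrink a film's average toward a site-wide prior.

    Acts as if every film starts with `prior_votes` imaginary votes at
    `prior_mean`; both defaults are illustrative assumptions.
    """
    return (votes * mean_rating + prior_votes * prior_mean) / (votes + prior_votes)


tiny = bayesian_average(5.0, 10)     # ten perfect scores
large = bayesian_average(4.5, 800)   # 800 votes averaging 4.5
print(f"{tiny:.2f} vs {large:.2f}")  # 3.57 vs 4.30
```

The ten 5-star ratings end up at about 3.57, below the film with 800 ratings averaging 4.5, which keeps most of its score at 4.30. As a film gathers more real votes, the imaginary ones matter less and its adjusted score approaches its raw average.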